AI vs. Traditional Call-to-Action Testing

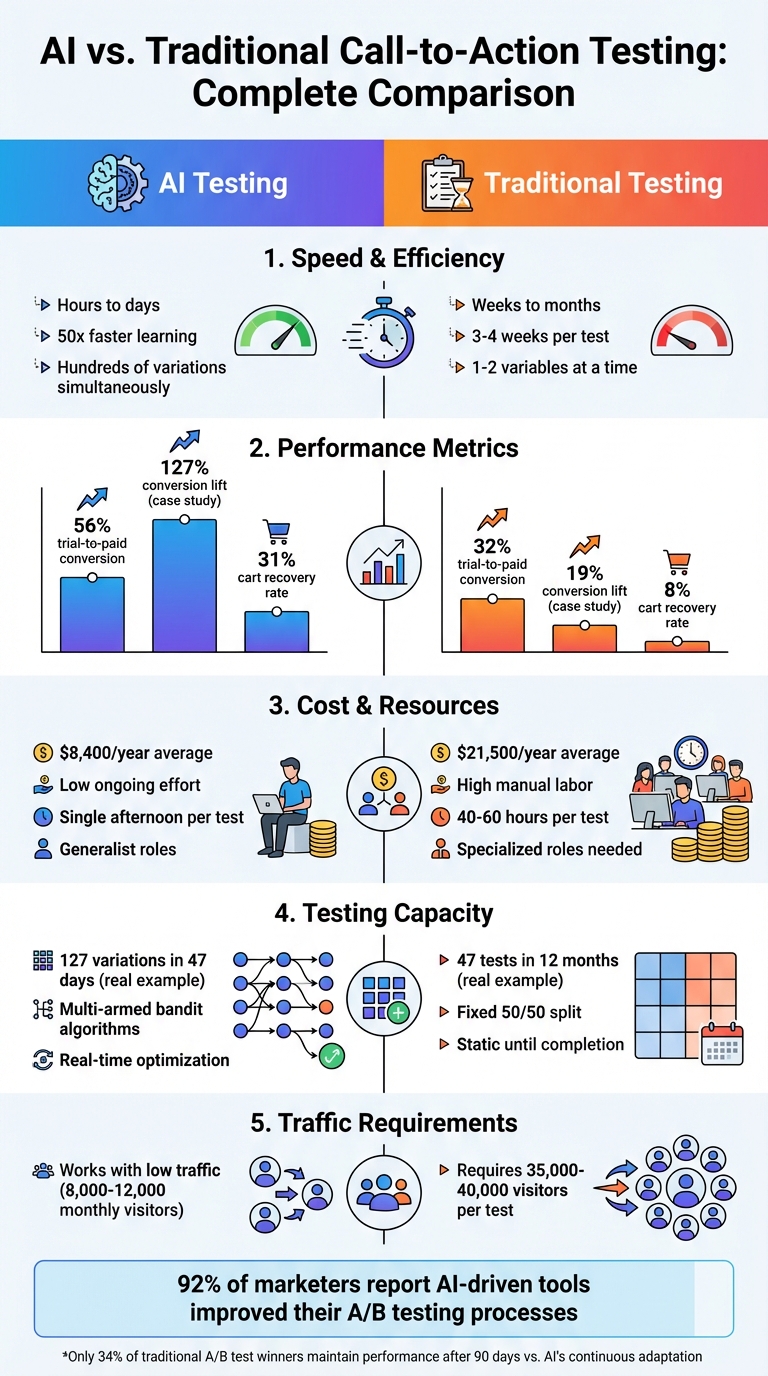

Want faster, better results for your call-to-action (CTA) tests? AI is changing the game. Here's why:

- Speed: AI evaluates hundreds of CTA variations in days (or even hours), while manual testing can take weeks or months.

- Cost: AI testing often costs less and delivers higher conversion rates, saving time and resources.

- Performance: AI dynamically adjusts CTAs based on user behavior, boosting conversions by up to 127% in some cases.

Example: A beauty brand using AI tested 127 CTA variations in 47 days, achieving a 127% lift in conversions. A similar brand using manual testing spent more money and took 12 months to achieve just a 19% lift.

Quick Comparison:

| Feature | AI Testing | Manual Testing |
|---|---|---|
| Speed | Hours to days | Weeks to months |
| Cost | Lower long-term costs | Higher labor costs |
| Testing Capacity | Hundreds of variations | 1–2 variables at a time |
| Conversion Lift | Up to 127% | ~19% |

AI is ideal for businesses with low traffic or complex testing needs, while manual methods may work for simpler, smaller campaigns. Combining both approaches can also balance efficiency and creativity.

Testing Speed and Efficiency

Traditional A/B testing can feel like a marathon. Each test typically takes 3–4 weeks to reach statistical significance, requiring around 35,000–40,000 visitors to validate just one hypothesis. Imagine a brand testing five different CTA elements - headline, button color, copy, placement, and design. Sequentially testing these could take four to five months, and if the campaign is more complex, it might stretch to 18 months. That’s a lot of time spent waiting for answers.

AI, however, flips the script by analyzing hundreds of variations simultaneously, delivering actionable results in a fraction of the time - sometimes in just hours or days. With a learning speed estimated to be 50 times faster, AI enables brands to gather hundreds of insights annually, compared to the handful traditional testing can provide.

How AI Speeds Up Testing

AI doesn’t just speed things up - it transforms the entire process. Traditional methods rely on evenly splitting traffic and waiting for a fixed sample size. In contrast, AI uses multi-armed bandit algorithms, which dynamically adjust traffic toward better-performing variants as soon as promising patterns emerge. This approach ensures fewer potential customers see underperforming CTAs, saving both time and opportunity.

Another game-changer? AI eliminates the manual work of creating test variations. Take Urban Bloom, for example. In August 2025, this direct-to-consumer indoor plant company used AI to generate 10 headlines, 5 descriptions, and 5 CTAs for their "Beginner's Plant Kit" campaign. With Facebook's Dynamic Creative AI, they tested all combinations simultaneously and identified the winning variant in just one week - three weeks faster than traditional methods. The results? A 30% higher click-through rate and a 22% lower cost per acquisition.

There’s also the case of an agency using Mnemonic AI in 2025. Over three weeks and 8,247 visitors, they compared AI-generated copy with manually crafted content. The AI-driven variant delivered a 40.3% conversion lift (from 2.8% to 3.9%) and achieved statistical significance (p-value = 0.004). This success highlights AI's ability to test bold, data-backed hypotheses that human intuition might overlook.
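As a rough check on figures like these, a two-proportion z-test can be sketched in a few lines. The even split of the 8,247 visitors and the conversion counts below are assumptions back-calculated from the reported rates, not data from the case study, so the result will only approximate the reported p-value.

```python
import math


def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value for the difference between two conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # erfc(|z| / sqrt(2)) is the two-sided tail area of a standard normal
    return math.erfc(abs(z) / math.sqrt(2))


# Assumed: an even traffic split, with counts derived from 2.8% and 3.9%
n_control, n_variant = 4123, 4124
p_value = two_proportion_p_value(
    conv_a=round(0.028 * n_control), n_a=n_control,
    conv_b=round(0.039 * n_variant), n_b=n_variant,
)
print(f"p-value: {p_value:.4f}")
```

A p-value below 0.05 is the conventional threshold for calling a result statistically significant.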

Why Manual Testing Takes Longer

Traditional A/B testing is inherently slow because it focuses on one element at a time, waiting for statistical significance before moving on. Each cycle requires 350–400 conversions per variant, which typically takes 3–4 weeks with moderate traffic.
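To see why traffic requirements climb so quickly, here is a minimal sketch of the standard sample-size approximation for comparing two conversion rates. The 2% baseline, the 2.5% target, the 5% significance level, and the 80% power are illustrative assumptions, not figures from the studies cited here.

```python
import math
from statistics import NormalDist


def visitors_per_variant(baseline_rate, expected_rate, alpha=0.05, power=0.80):
    """Approximate visitors needed per variant for a two-sided test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = (baseline_rate * (1 - baseline_rate)
                + expected_rate * (1 - expected_rate))
    effect = expected_rate - baseline_rate
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


# Hypothetical test: detect a move from a 2% to a 2.5% conversion rate
needed = visitors_per_variant(0.02, 0.025)
print(f"Visitors per variant: {needed:,}")
print(f"Visitors for a two-variant test: {2 * needed:,}")
```

Because the required sample grows with the inverse square of the effect size, halving the lift you want to detect roughly quadruples the traffic you need.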

The rigid traffic split - usually a fixed 50/50 distribution - means underperforming CTAs receive just as much exposure as the better ones throughout the test period. This inefficiency wastes valuable conversion opportunities. As Troopod.io puts it:

"The speed of learning determines the speed of growth. Traditional testing is too slow for modern competition".

This sluggish pace is why 92% of marketers report that AI-driven tools have improved their A/B testing processes. By compressing testing timelines from weeks to days - or even hours - AI allows brands to iterate faster, respond to market changes, and seize opportunities before they disappear. These limitations of manual testing underline why AI’s dynamic approach is a game-changer for staying competitive in today’s fast-moving markets.

Conversion Performance and Results

When it comes to conversion rates, AI doesn't just outperform traditional methods in speed - it delivers better results where it matters most. For example, AI-native companies report a 56% trial-to-paid conversion rate, 75% higher in relative terms than the 32% achieved by traditional SaaS companies. That is a substantial edge when it comes to turning interest into revenue.

The advantages extend beyond conversion rates. AI-driven companies close deals faster - averaging 20 weeks compared to 25 weeks for traditional companies - and spend less per opportunity, around $8,300 versus $8,700. These results highlight how AI fine-tunes CTAs in real time based on user behavior, a feat traditional testing struggles to replicate.

How AI Improves Conversion Rates

AI takes the guesswork out of optimizing CTAs by predicting winners before users even interact. By analyzing massive datasets, it identifies patterns that humans might overlook, creating personalized CTAs tailored to each visitor's intent. This ability to adapt on the fly often leads to a 10–15% increase in sales and a 10% reduction in customer churn for companies leveraging AI-powered personalization.

A great example of this is a Mumbai-based direct-to-consumer beauty brand. In late 2024, they achieved a 127% increase in conversion rates (from 1.4% to 3.18%) in just 47 days by testing 127 CTA variations simultaneously for around $8,500. Traditional methods would have struggled to uncover these insights so quickly.
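Lift figures like this one are relative changes, not percentage-point differences. A minimal sketch of the arithmetic, using the rates quoted above (the function name is ours, chosen for illustration):

```python
def relative_lift(baseline_rate, new_rate):
    """Relative change in conversion rate, as a fraction of the baseline."""
    return (new_rate - baseline_rate) / baseline_rate


# Rates quoted for the beauty brand: 1.4% before, 3.18% after
lift = relative_lift(1.4, 3.18)
print(f"{lift:.0%}")  # prints 127%
```

The same move is only 1.78 percentage points in absolute terms, which is why it matters to check which of the two a report is quoting.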

AI also excels at recovering abandoned carts. By monitoring subtle user behaviors - like hesitation before closing a page - AI can trigger real-time overlays that re-engage users. This approach achieves a 31% recovery success rate, compared to just 8% for traditional post-abandonment emails.

What Traditional A/B Testing Delivers

Traditional A/B testing has its strengths, particularly when high statistical certainty is required. It works well for testing specific hypotheses or making permanent changes on high-traffic pages. With a fixed 50/50 traffic split and clear stopping points, traditional methods ensure reliable results by identifying the truly superior variant.

However, these methods often struggle with long-term consistency. A study of over 2,800 A/B tests found that only 34% of "winning" variants maintained their performance after 90 days. Factors like seasonality, shifting traffic sources, and evolving user behavior are tough for traditional testing to account for.

Interestingly, human intuition still plays an important role in certain scenarios. In September 2024, Norcross Mazda and Volvo Cars Memphis tested human-written ad copy against AI-generated versions. The human-written ads outperformed AI in two out of four campaigns, generating 900 more clicks, a 2% higher click-through rate, and reducing cost-per-click by 5 cents. This shows that while AI excels in rapid optimization, human creativity and emotional nuance remain crucial for brand storytelling and trust-building.

Performance Comparison Table

| Metric | AI-Optimized Testing | Traditional A/B Testing |
|---|---|---|
| Trial-to-Paid Conversion | 56% | 32% |
| Sales Cycle Length | 20 weeks | 25 weeks |
| Cost per Opportunity | $8,300 | $8,700 |
| Abandonment Recovery | 31% success rate | 8% success rate |
| Testing Capacity | Hundreds of variables | 1–2 variables at a time |
| Speed to Results | Hours to days | Weeks to months |
| Long-Term Stability | Continuous adaptation | 34% of winners maintain lift |

Handling Testing Complexity

Managing testing complexity highlights another area where AI optimization shines compared to traditional methods. While A/B testing is effective for straightforward comparisons - like choosing between a red or blue button - it struggles when multiple variables need to be tested simultaneously. AI, on the other hand, thrives in these complex scenarios, offering faster and more accurate results.

AI's Multi-Variable Testing Capacity

AI can analyze hundreds - or even thousands - of variations at the same time. Imagine you want to test 12 headlines, 8 images, and 6 CTAs. That’s 12 × 8 × 6 = 576 possible combinations. AI runs these tests simultaneously, capturing how different elements interact with each other, and delivers insights in days instead of months.

What sets AI apart is its ability to detect interactions between variables. For instance, a headline that performs well on its own might actually hurt conversions when paired with specific images or CTAs. Traditional testing methods often miss these nuances, but AI identifies these patterns with precision.

Traditional A/B Testing Constraints

A/B testing, by design, focuses on one variable at a time. This simplicity becomes a major limitation when dealing with more complex scenarios. Victor Kostyuk, Head of Engineering at Braze, explains:

"An A/B test only lets you test one variable at a time. What if you want to test five different headlines, three different descriptions, and four different calls-to-action? To test all possible combinations of those would require 60 different ad variations".

Beyond this, traditional A/B testing demands large sample sizes. To detect meaningful differences, you’d need around 35,000–40,000 visitors. This makes it nearly impossible to conduct complex tests on low-traffic pages or niche audiences. It’s no surprise that while 77% of companies globally run A/B tests, only 22% attempt multivariate testing due to the high traffic requirements and added complexity.
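The combinatorial growth described in the Braze quote is easy to reproduce. This sketch enumerates every combination for five headlines, three descriptions, and four CTAs; the placeholder names are hypothetical stand-ins for real copy.

```python
from itertools import product

# Placeholder labels standing in for real copy
headlines = [f"headline_{i}" for i in range(1, 6)]
descriptions = [f"description_{i}" for i in range(1, 4)]
ctas = [f"cta_{i}" for i in range(1, 5)]

# Every full-factorial combination of the three elements
variations = list(product(headlines, descriptions, ctas))
print(len(variations))  # 5 * 3 * 4 = 60
print(variations[0])
```

Each added element multiplies the total. Since every variation in a fixed-split test needs its own share of traffic, the visitor requirement grows just as fast.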

Another challenge? Winners identified in isolation often fail when combined. For example, a Bangalore-based electronics company tested an "Urgent Headline" (+18%), "Premium Imagery" (+12%), and "Scarcity CTA" (+9%). While each performed well individually, combining them caused a 4% drop in conversions because the aggressive tone conflicted with the high-end visuals. These limitations make traditional testing less effective for comprehensive optimization, especially when multiple elements interact in unexpected ways.

Cost, Expertise, and Resources

When comparing AI-driven testing to traditional A/B testing, it's clear that cost and resource allocation play a key role. While traditional testing may appear cheaper at first, the ongoing manual effort can quickly increase expenses. On the other hand, AI-driven methods require a larger initial investment but significantly reduce long-term labor demands.

AI Investment and Returns

AI testing tools often come with upfront costs and require a minimum ad spend to operate effectively. However, the return on investment (ROI) typically makes up for these expenses. The real advantage lies in time savings and increased efficiency. As Eliot Knepper, Co-Founder of Mnemonic AI, puts it:

"Five years ago, running twenty variations of a landing page would have required a month of copywriter and designer time. Today, with AI-augmented workflows, it takes an afternoon".

Take Panera Bread as an example. In April 2024, they used AI-powered decision-making integrated with Braze to manage personalized cross-channel menu campaigns. This saved the team over 50 hours of manual work and resulted in a 5% lift in retention for at-risk guests, along with a 2X boost in loyalty offer redemptions. Similarly, BUGECE, a music and events platform, leveraged AI for intelligent message timing, achieving a 63% increase in email open rates and a 32% lift in signup conversions.

AI also helps smaller teams bridge skill gaps. Peter Lowe from Crazy Egg highlights this point:

"The net effect is that a full-fledged A/B testing program is now within reach for teams that don't have dedicated copywriters, designers, and developers".

Traditional Testing Expenses

Traditional A/B testing may have lower initial costs, with many tools being free or inexpensive. However, the manual labor required can become a significant expense. Each test typically involves copywriters, designers, and developers, consuming anywhere from 40 to 60 hours of skilled labor before factoring in data analysis and monitoring.

For small businesses with ad budgets under $5,000 per month, manual testing might work well for smaller campaigns. But as testing grows more complex, so do the costs. For instance, in 2025, a Mumbai-based D2C beauty brand spent ₹4.5 lakhs per quarter (roughly $5,400, or about $21,500 a year) on a traditional CRO agency. Over a year, they ran 47 tests, but only 4 resulted in long-term "wins" after 30 days, leading to a 19% conversion lift. In contrast, another brand using AI optimization spent ₹7 lakhs total (approximately $8,400) in the first year. They tested 127 variations simultaneously, achieving a 127% conversion lift in just 47 days.

Cost and Resource Comparison Table

The differences in cost and resource demands are summarized in the table below:

| Feature | AI-Driven Testing | Traditional A/B Testing |
|---|---|---|
| Upfront Cost | Higher (software fees + technical setup) | Lower (basic tools + existing staff) |
| Ongoing Effort | Low (monitoring and parameter adjustments) | High (manual creation and monitoring) |
| Staff Requirements | Generalist/managerial roles | Specialized (copywriters, designers, analysts) |
| Speed to Results | Hours to days | Weeks to months |
| Scalability | High (multiple variables tested simultaneously) | Low (limited to 1–2 variables per test) |
| Time Per Test | Single afternoon with AI workflows | 40–60 hours of skilled labor |
| Expertise Needed | Moderate technical setup | High statistical and creative expertise |

While traditional testing may save money upfront, its reliance on human resources makes it more costly over time. AI-driven testing, though requiring a larger initial investment, frees up teams to focus on strategic priorities and delivers faster, scalable results. For businesses looking to grow, the ability to test thousands of variations efficiently ensures better long-term outcomes, making AI a vital tool for startups and SMBs alike.

Real-Time Optimization Capabilities

The key difference between AI and traditional testing lies in how they handle live data. Traditional A/B testing operates within a fixed framework, locking variables and waiting until the test ends. AI, on the other hand, reacts to user interactions in real time, continuously adjusting CTAs based on live performance. Here’s a closer look at how these two approaches stack up.

How AI Adjusts CTAs in Real Time

AI leverages Multi-Armed Bandit (MAB) algorithms to dynamically allocate traffic to better-performing CTAs. Instead of sticking to a 50/50 split, it can shift traffic to a 90/10 ratio as soon as it identifies a strong performer. This minimizes the exposure to underperforming CTAs.
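To make the traffic-shifting idea concrete, here is a minimal simulation using Thompson sampling, one common bandit strategy. The sources above don't say which algorithm any particular platform uses, and the two "true" conversion rates are invented for the simulation.

```python
import random


def thompson_sampling(true_rates, visitors, seed=0):
    """Simulate a bandit test and return how often each CTA was shown."""
    rng = random.Random(seed)
    arms = range(len(true_rates))
    successes = [0] * len(true_rates)
    failures = [0] * len(true_rates)
    shown = [0] * len(true_rates)
    for _ in range(visitors):
        # Draw a plausible conversion rate for each CTA from its Beta posterior
        samples = [rng.betavariate(successes[i] + 1, failures[i] + 1) for i in arms]
        arm = samples.index(max(samples))  # show the CTA with the best draw
        shown[arm] += 1
        # Simulated visitor converts with the arm's (hidden) true rate
        if rng.random() < true_rates[arm]:
            successes[arm] += 1
        else:
            failures[arm] += 1
    return shown


# Hypothetical CTAs converting at 3% and 6%
shown = thompson_sampling([0.03, 0.06], visitors=20_000)
shares = [count / sum(shown) for count in shown]
print([f"{share:.0%}" for share in shares])
```

Early on, both CTAs get similar exposure because the posteriors overlap. As evidence accumulates, the weaker CTA wins fewer draws and its share of traffic shrinks without anyone manually ending the test.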

What makes AI particularly powerful is its ability to adapt on the fly. If a CTA that worked well during weekdays starts underperforming on weekends, AI can adjust traffic allocation accordingly. It doesn’t stop there - AI also tracks micro-behaviors like mouse movements, scroll pauses, and rage clicks to predict abandonment up to 30 seconds in advance. This allows it to trigger personalized popups or offers in real time.

Additionally, AI tailors CTAs based on factors like location, time, device, and traffic source. For example, a mobile user visiting from Instagram at night might see a different CTA than a desktop user arriving from Google in the afternoon. AI systems can even optimize the timing of push notifications or emails to ensure they land when the user is most likely to engage. As Bart Leusink, Director of Data Science at BlueConic, explains:

"What if you didn't have to wait? What if your site could learn and adapt in real time? That's the promise of agentic personalization".

This kind of adaptability offers a stark contrast to the rigid structure of traditional testing.

Traditional Testing's Fixed Approach

Traditional A/B testing, while effective in its time, lacks the flexibility to respond to changing user behavior. Once a test is set up, traffic is split evenly between variants, and the process can take weeks to reach statistical significance. During this period, many users may interact with a less effective CTA, potentially impacting conversions.

Even after a winner is declared, the results often reflect outdated user behavior. By the time the winning CTA is implemented, market conditions or consumer preferences may have shifted. A study of 2,847 A/B tests found that only 34% of declared winners maintained their performance advantage after 90 days, with 41% regressing and 7% eventually underperforming compared to the original control.

Traditional testing also focuses on identifying a single "best" variant for the average user, ignoring individual differences. This one-size-fits-all approach misses opportunities to tailor experiences for unique visitors.

| Feature | Traditional A/B Testing | AI Real-Time Optimization |
|---|---|---|
| Traffic Allocation | Fixed (e.g., 50/50) until the test ends | Dynamic - shifts from 50/50 to 90/10 as data identifies a leader |
| Test Duration | Fixed (weeks or months) | Continuous; adjusts indefinitely |
| User Experience | Static; all users see the same variant | Personalized; adapts CTAs in real time |
| Response to Trends | Reactive; requires manual updates post-test | Proactive; adjusts instantly to performance changes |
| Risk Exposure | High; many users exposed to weaker variants | Low; traffic quickly shifts away from underperforming CTAs |

Platforms like BrandMultiplier.ai are taking full advantage of these AI-driven capabilities to refine CTAs continuously, ensuring every visitor gets the most relevant and timely interaction.

The difference is clear: while traditional testing waits for final results and manual decisions, AI optimizes in real time, making it a powerful tool for businesses looking to maximize every visitor’s potential. Real-time optimization isn’t just a feature - it’s a game-changer.

Choosing the Right Method for Your Business

Deciding between AI-driven and traditional A/B testing depends on factors like traffic volume, budget, and how quickly you need results. Traditional A/B testing works well for high-traffic websites, typically requiring 3–4 weeks to achieve statistical significance. However, for smaller businesses with lower traffic (around 8,000–12,000 monthly visitors), traditional methods can take years to clear optimization backlogs - something AI-driven solutions can tackle in just a few months.

Budget is another key consideration. A traditional CRO agency costs around $21,500 annually, while an AI-powered platform averages about $8,400. Case studies consistently show that AI not only reduces costs but also boosts conversion rates compared to traditional methods.

If your ad spend is below $5,000 per month and you're testing straightforward elements like button colors or headline copy, traditional methods might provide the control you need. But for businesses managing multiple channels, testing numerous CTAs, or dealing with low traffic, AI becomes essential. As Eliot Knepper, Co-Founder of Mnemonic AI, puts it:

"The future of conversion optimization isn't human versus AI. It's human with AI, systematically applied".

Combining these approaches can also deliver strong results. A hybrid strategy uses AI for generating hypotheses and testing variations while keeping human oversight for strategy and creative direction. This is particularly useful for SMBs transitioning to AI, as it balances the speed and scalability of AI with the need to maintain brand consistency. Tools like BrandMultiplier.ai integrate AI-driven optimization with strategic oversight, ensuring CTAs align with your brand voice while adapting in real time. Setting guardrails - such as brand voice guidelines, frequency caps, and compliance rules - helps prevent AI-generated content from feeling generic or misaligned.
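Guardrails like these can start as a simple pre-publication filter. The sketch below is purely illustrative: the class name, banned phrases, and length limit are hypothetical, and a real setup would encode your own brand and compliance rules.

```python
from dataclasses import dataclass, field


@dataclass
class BrandGuardrails:
    """Hypothetical rule set applied to AI-generated CTA copy."""
    banned_phrases: list[str] = field(
        default_factory=lambda: ["guaranteed", "act now!!!"]
    )
    max_length: int = 40

    def violations(self, cta_text: str) -> list[str]:
        problems = []
        if len(cta_text) > self.max_length:
            problems.append("too long")
        lowered = cta_text.lower()
        for phrase in self.banned_phrases:
            if phrase in lowered:
                problems.append(f"banned phrase: {phrase}")
        return problems


guardrails = BrandGuardrails()
candidates = ["Start your free trial", "Guaranteed results, act now!!!"]
# Only copy with no violations goes forward to testing
approved = [text for text in candidates if not guardrails.violations(text)]
print(approved)
```

Anything the filter rejects can be routed to a human reviewer instead of being discarded, which keeps people in the loop for borderline copy.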

FAQs

How much traffic do I need for AI CTA testing?

The amount of traffic you need for AI-driven CTA testing largely depends on your goals and the type of test you're running. To get reliable results, it's best to focus on high-traffic pages. These pages can help you reach statistically significant outcomes faster.

The key is ensuring enough visitors interact with your CTA variations to provide meaningful insights. While there’s no hard-and-fast rule for the exact traffic volume, prioritizing pages with a steady flow of visitors increases the chances of achieving reliable results within your preferred timeframe.

Will AI testing hurt my brand voice?

AI testing can work seamlessly with your brand voice when approached carefully and paired with human oversight. While there's a chance that AI might churn out content that feels too generic, this can be avoided by setting clear guidelines that safeguard your brand's unique tone and style. Think of AI as a tool to expand and refine your messaging - not as a substitute. By using AI responsibly, you can amplify your voice, maintain consistency, and discover what truly connects with your audience.

When should I use a hybrid AI + A/B approach?

A hybrid AI + A/B testing approach merges the speed and automation of AI with the depth of insights from traditional A/B testing. This combination simplifies test design and analysis, allowing for quicker, more scalable, and highly tailored experiments. It's particularly effective in situations where manual testing might be sluggish or prone to bias. AI can evaluate multiple hypotheses at once and adjust dynamically, leading to smarter decisions and better conversion rates.
